The Linux Storage, Filesystem, Memory Management & BPF Summit (LSFMMBPF) brings together leading developers, researchers, and Linux kernel subsystem maintainers to discuss, design, and implement improvements to the filesystem, storage, and memory management subsystems. The work initiated at this event typically makes its way into the mainline kernel and Linux distributions within the following 24 to 48 months.

In the context of the partnership between Bootlin, the eBPF Foundation and the Alpha Omega organization, Bootlin engineer Alexis Lothoré is currently working on adding KASAN memory checker support to the eBPF subsystem. This work aims to help eBPF subsystem developers catch bugs early when they are introduced in complex parts of the kernel, such as the eBPF verifier.

This work has already led to some initial design discussions and a proof-of-concept implementation on the kernel mailing lists. Because it requires close collaboration with kernel developers and maintainers, Alexis has also been invited to attend LSFMMBPF 2026, which takes place from May 4th to May 6th in Zagreb, Croatia. There he will present his ongoing work on “Adding KASAN support for JITed programs”. This will be an opportunity to discuss and iron out the remaining roadblocks and details needed to get the feature integrated into the kernel. LSFMMBPF is not a classic conference where people primarily attend talks: the goal is to trigger discussions that move solutions and features forward in the kernel, in a “workshop-like” atmosphere. This work is one of many ongoing Linux kernel topics that need discussion; more details are available in the full event schedule.

We are proud to have one of our engineers contributing to this gathering of Linux kernel experts. This invitation highlights Bootlin’s strong involvement in upstream Linux kernel development.

Linux 6.19 was

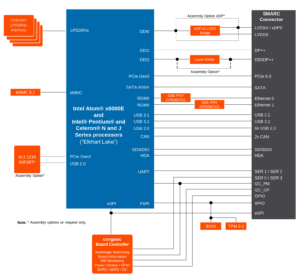

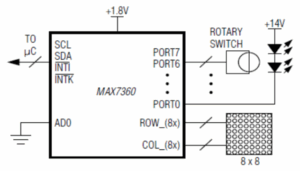

Linux 6.19 was  Among all activities I’ve been doing at Bootlin during the past few months, one has been to add support for the

Among all activities I’ve been doing at Bootlin during the past few months, one has been to add support for the