It is no secret that cybersecurity has become an important, if not critical, topic over the past few years. This naturally extends to embedded devices, including those running Linux and open-source software. For many years, Bootlin has been supporting its customers in implementing security features in embedded products: secure boot, encryption, Trusted Execution Environments, and more. We also help maintain Linux systems over time through CVE monitoring, long-term maintenance, and regular upgrades of Linux BSP components.

However, Bootlin’s DNA has never been limited to providing engineering services where we simply “do the work” for our customers. Sharing knowledge and empowering engineers to build and maintain their own systems is at the core of what we do.

Based on this philosophy and our security expertise, we are excited to announce a brand new training course: Embedded Linux Security.

This course covers a wide range of topics essential to securing Linux-based embedded devices: fundamental security concepts, hardware-enforced security mechanisms, secure boot, data confidentiality and encryption, key management, user-space security, measured boot, system maintenance, regulatory compliance, and secure update strategies. We have brought together all the key building blocks needed to design and maintain a secure embedded Linux system in a single, comprehensive course. You can review the complete training agenda for full details.

Our launch customer for this course is EBV Elektronik, for whom we will deliver the very first session in Spain in mid-May. In line with Bootlin’s open-source culture, we will publish the complete training materials, free of charge and under an open-source license, shortly after this first session, adding them to the materials of our nine other training courses already freely available.

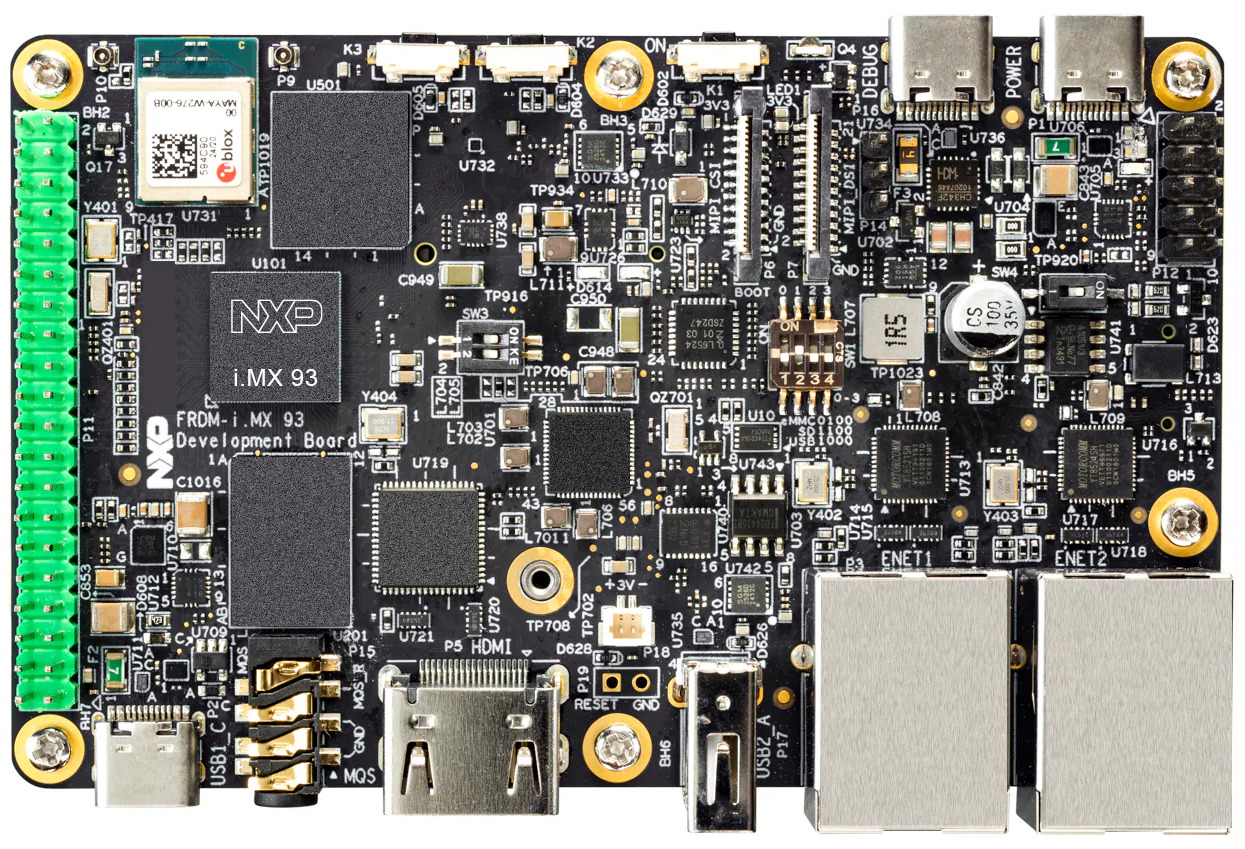

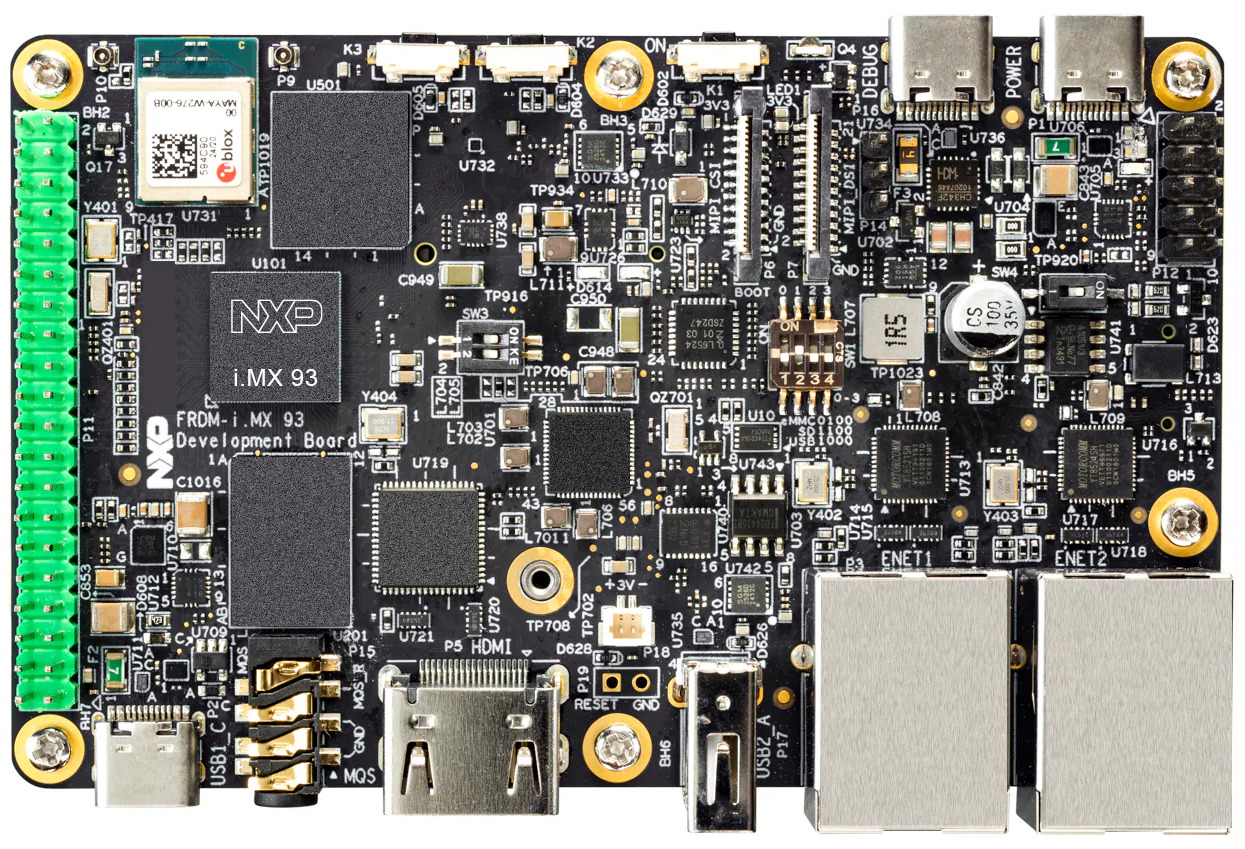

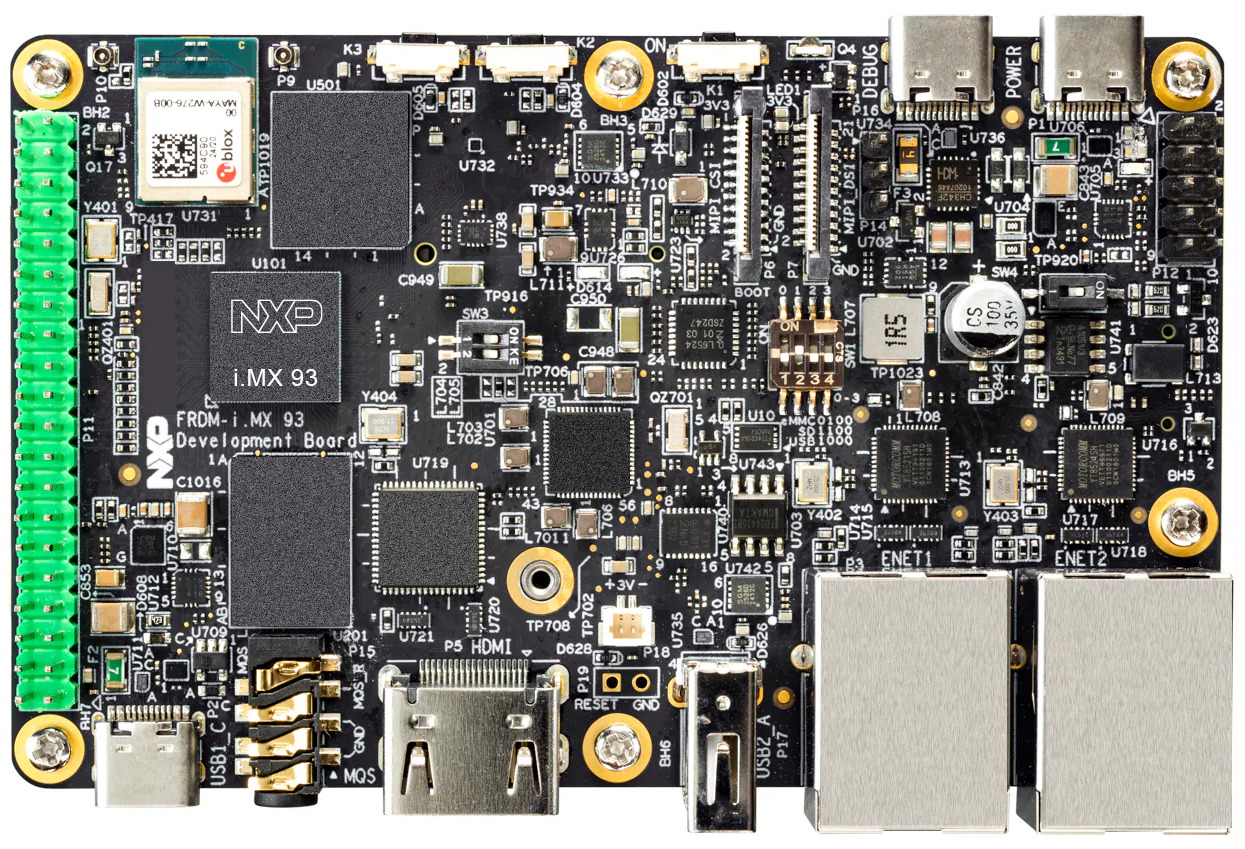

Like most of our training courses, this one combines lectures with practical labs. The lectures are hardware-agnostic, providing knowledge applicable across platforms, with a natural focus on ARM-based systems. For the hands-on labs, we use the NXP i.MX93 FRDM, a platform offering strong security features and solid upstream software support.

If you are interested in attending this course, several options are available:

- Join one of our first public online sessions, delivered by the Bootlin engineers who designed the course:

- Organize a private in-person session at your location – contact us

- Organize a private online session dedicated to your team – contact us

We hope this new training will be useful to engineers and teams working on securing their embedded Linux systems, whether they are just getting started or already tackling advanced topics. We also hope to see many of our readers join one of the upcoming sessions, and we look forward to your feedback on this new course!

The buildroot-external-st project is an extension of the Buildroot build system with ready-to-use configurations for the STMicroelectronics STM32MP1 and STM32MP2 platforms.

Bootlin’s

Bootlin’s  As part of our ongoing effort to improve and modernize our training offering, our three most popular training courses are now available on the

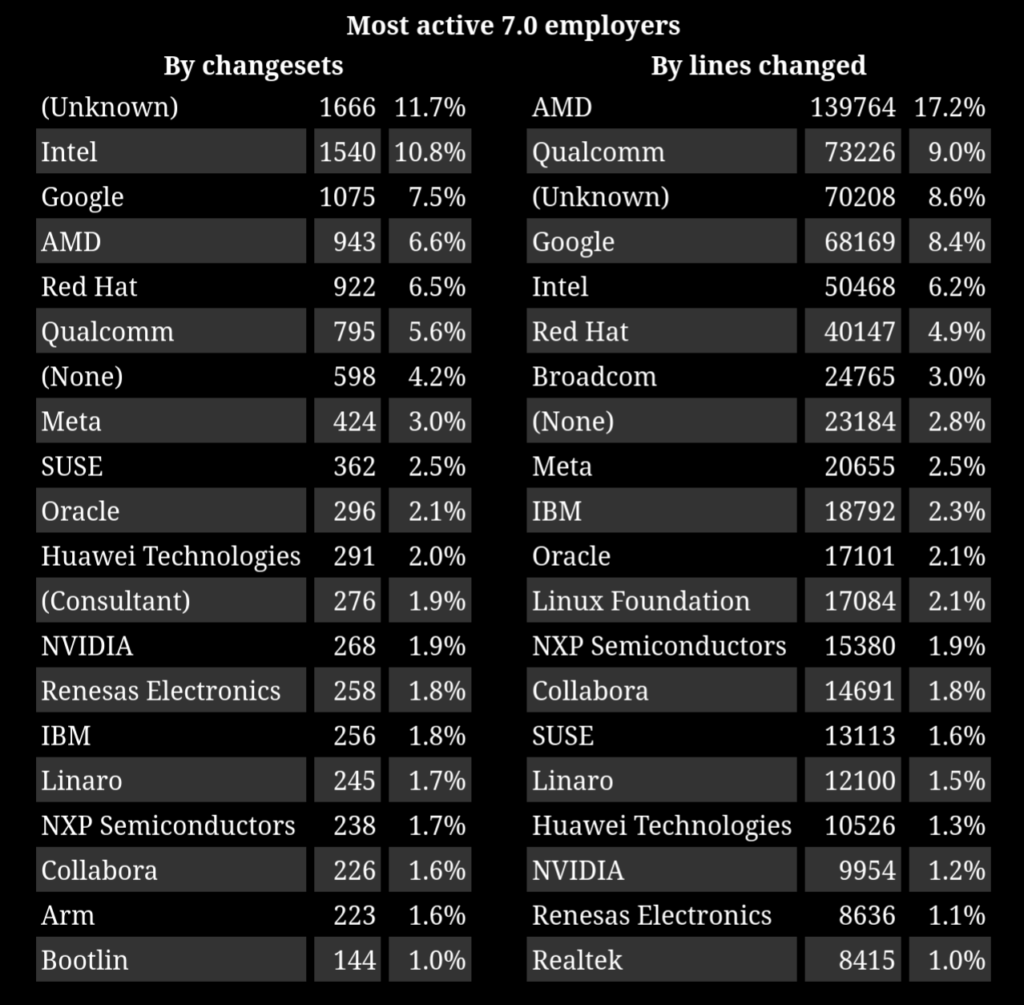

As part of our ongoing effort to improve and modernize our training offering, our three most popular training courses are now available on the  Linux 7.0

Linux 7.0

Like most of our training courses, this one combines lectures with practical labs. The lectures are hardware-agnostic, providing knowledge applicable across platforms, with a natural focus on ARM-based systems. For the hands-on labs, we use the

Like most of our training courses, this one combines lectures with practical labs. The lectures are hardware-agnostic, providing knowledge applicable across platforms, with a natural focus on ARM-based systems. For the hands-on labs, we use the