Introduction

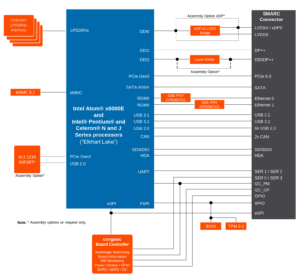

Congatec’s x86 System-on-Modules (SoMs) include a Board Controller connected to the processor via an eSPI bus. It provides features such as I²C buses, GPIOs, a watchdog timer, and sensors for monitoring voltage, fan speed, and more.

Congatec provides a Yocto meta-layer for these SoMs: meta-congatec-x86. Among other components, this meta-layer includes a driver, a library, and tools for interfacing with the Board Controller.

The primary issue with the provided driver is that it deviates from standard Linux APIs. It exposes a custom character device and relies on custom ioctl() calls to communicate with userspace. This non-standard approach hurts both compatibility and portability. For example, an application using the standard Linux GPIO API would need to be adapted to access the GPIOs of Congatec’s Board Controller. Conversely, software written specifically for the Board Controller’s GPIOs would require changes to work with GPIOs on other platforms.
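To make the portability argument concrete, here is a minimal sketch of reading one GPIO line through the standard Linux GPIO character device (uAPI v2, declared in `<linux/gpio.h>`). The function name `read_gpio_line`, the consumer label, and any chip path or line offset passed to it are illustrative assumptions, not taken from Congatec's or any upstream driver. Nothing in it is vendor-specific, so it should work with any GPIO controller that has a standard in-kernel GPIO driver.

```c
#include <assert.h>
#include <fcntl.h>
#include <linux/gpio.h>
#include <string.h>
#include <sys/ioctl.h>
#include <unistd.h>

/*
 * Read one GPIO line through the standard GPIO character device (uAPI v2).
 * chip_path and offset are supplied by the caller (hypothetical values in
 * this sketch). Returns 0 or 1 for the line level, or -1 on any error.
 */
int read_gpio_line(const char *chip_path, unsigned int offset)
{
    struct gpio_v2_line_request req;
    struct gpio_v2_line_values vals;
    int chip_fd, ret;

    assert(chip_path != NULL);

    chip_fd = open(chip_path, O_RDONLY);
    if (chip_fd < 0)
        return -1;

    /* Request a single line, configured as an input. */
    memset(&req, 0, sizeof(req));
    req.offsets[0] = offset;
    req.num_lines = 1;
    req.config.flags = GPIO_V2_LINE_FLAG_INPUT;
    strncpy(req.consumer, "gpio-example", sizeof(req.consumer) - 1);

    ret = ioctl(chip_fd, GPIO_V2_GET_LINE_IOCTL, &req);
    close(chip_fd); /* the line request fd stays valid on its own */
    if (ret < 0)
        return -1;

    /* Bit 0 of mask/bits corresponds to the first requested line. */
    memset(&vals, 0, sizeof(vals));
    vals.mask = 1;
    ret = ioctl(req.fd, GPIO_V2_LINE_GET_VALUES_IOCTL, &vals);
    close(req.fd);
    if (ret < 0)
        return -1;

    return (int)(vals.bits & 1);
}
```

A caller would invoke it as, for instance, `read_gpio_line("/dev/gpiochip0", 3)`, where both the device path and the offset depend on the target system. With a vendor-specific character device, the equivalent code would instead have to be written against that vendor's own ioctl() definitions.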

Additionally, because the driver is out-of-tree, it raises concerns about long-term support and maintainability. Will the driver be regularly updated to remain compatible with future Linux kernel versions, given that the kernel's internal APIs are not stable? Will bugs and security vulnerabilities be addressed in a timely manner?

One of our customers, planning to use the Conga-SA7 board in a commercial product, recognized these challenges early on. As a result, they asked us to integrate support for the Congatec Board Controller directly into the mainline Linux kernel. Upstreaming the driver into the kernel would eliminate these issues by ensuring better portability, long-term maintenance, and community support.
