The end of August has arrived, bringing an end to Paul’s engineering internship at Bootlin, focused on bringing mainline Linux support for the VPU found on Allwinner platforms. Over the past six months, we have worked hard to reach the goals announced in the project’s crowdfunding campaign and we were able to deliver most of the main goals last month.

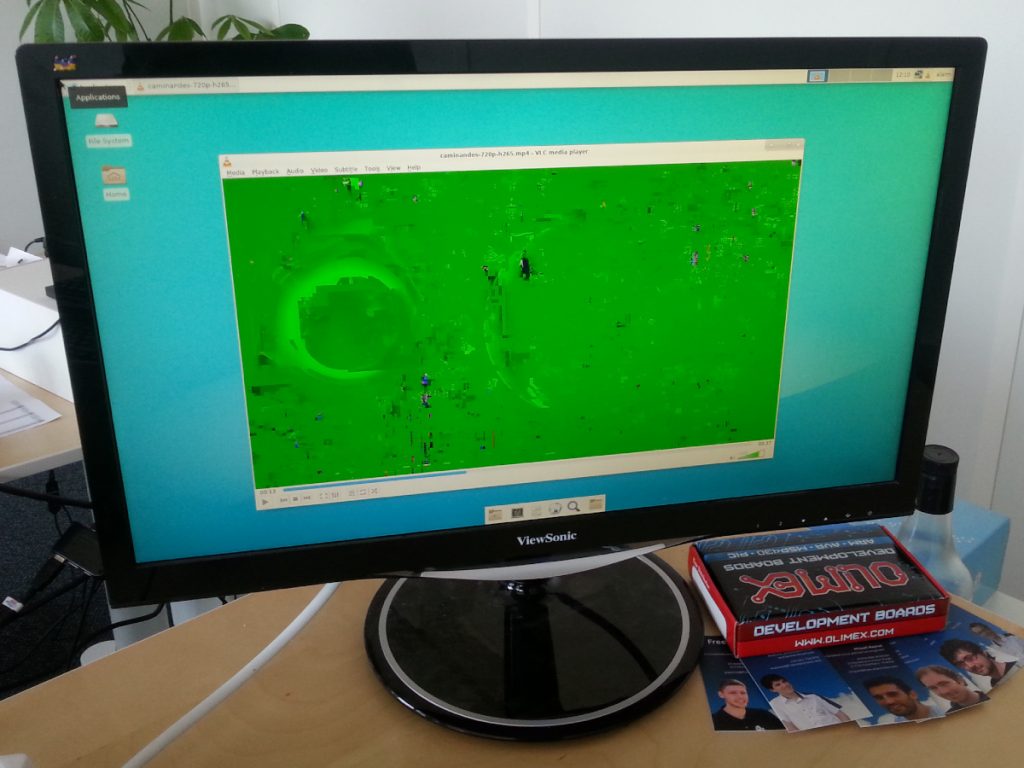

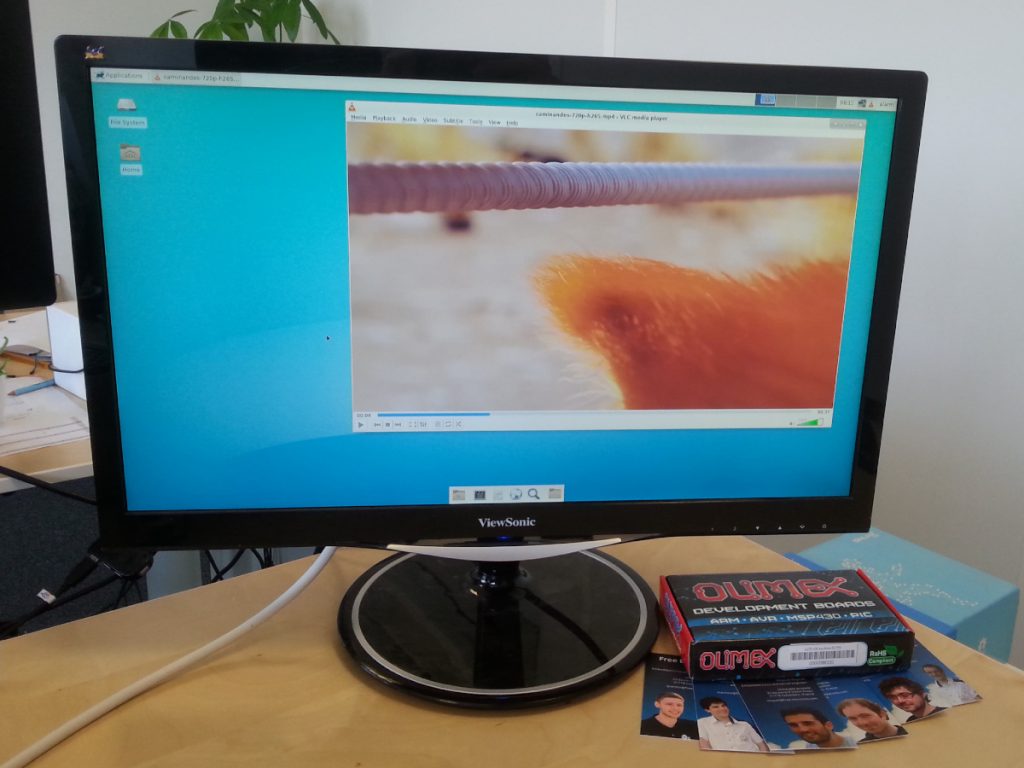

Since last month delivery, we made great progress on supporting the H265 codec, one of the stretch goals that were funded during the campaign. A dedicated patch series introducing support for it was submitted to the linux-media mailing list earlier this week, as well as a new iteration of the base Cedrus VPU driver. As the Request API is on the verge of integrating the Linux kernel, our VPU driver should follow pretty soon.

Reaching the end of the funding: a status on where we stand

We have now exhausted the budget that was provided through the crowdfunding campaign: both Maxime Ripard’s time (who worked mainly on the H264 decoding and helping with DRM topics) and Paul’s internship are over, and therefore the remaining work will be done on a best-effort basis, without direct funding. This will therefore be the last weekly update, but we will be publishing updates once in a while when interesting progress is made.

Here is a quick summary of our current status, compared to what was promised during our Kickstarter campaign:

- Making sure that the codec works on the older Allwinner SoCs that are still widely used: A10, A13, A20, A33, R8 and R16. This goal is fully met;

- Polishing the existing MPEG2 decoding support to make it fully production ready. This goal is fully met;

- Implementing H264 video decoding. This goal is fully met with base H264 decoding support implemented. However, a number of more advanced H264 features have not been implemented, and therefore additional improvements could be made;

- Modifying the Allwinner display driver in order to be able to directly display the decoded frames instead of converting and copying those frames. This goal is fully met.

- Providing a user-space library easy to integrate in the popular open-source video players. This goal is partially met. We do provide a user-space library that offers a VA-API implementation, however the integration with popular video players turned out to be a lot more challenging than expected, and we only offer Kodi integration at this point. See below for details;

- Upstreaming those changes to the official Linux kernel. This goal is in progress, on both the VPU driver side and DRM improvements side;

- Supporting the newer Allwinner SoCs (H3, H5, A64). This goal is partially met, since H3 is supported, but not yet H5 and A64;

- H265 video decoding support. This goal is fully met with base H265 decoding support implemented. Like H264, a number of more advanced features have not been implemented, so there is room for more work.

The most challenging topic: integration with open source video players

The major pitfalls that we encountered are related to integrating our accelerated video decoding pipeline with multimedia players. They will require extra work out of the scope of the VPU campaign to reach a production-ready state.

We considered a number of options for integrating with a desktop environment under Xorg, which was especially tricky for the oldest Allwinner platforms where the VPU outputs a tiled YUV format. The chain of required operations includes untiling, colorspace conversion (from YUV to RGB), scaling and composition.

- We first resorted to the main CPU for all the required operations (including NEON-backed untiling routines), which becomes unbearably slow as soon as scaling is involved in the process.

- We tried to bring-in the GPU for accelerating the untiling, colorspace conversion, scaling and composition operations involved. Although we wrote a shader-based untiler, the Mali blobs did not allow for importing the raw frame data on a byte-by-byte basis. This made GPU acceleration unusable for our use case in practice. Bringing-in the GPU for the final composition step only (that should be possible with GBM-enabled blobs) could however bring some speedup.

- Another lead is to use the Xv extension of the X11 API, that fits the bill for using the Display Engine hardware to accelerate these operations, but this interface is quite old now and increasingly deprecated. It also only allows sub-optimal use cases, with one video at a time.

We also investigated the situation for media players that can run without a display server, which removes the need for the composition step and allows using the Display Engine hardware directly, through the DRM interface.

- We succeeded at bringing up support for the Kodi mediacenter, by adding the required bits to implement a zero-copy pipeline.

- We worked on getting GStreamer to correctly pipe VAAPI-based decoding to the DRM-enabled kmssink without going through the GPU, but did not end up with any functional result, so significant work remains in that area.

Going further: what will happen now ?

Here are the topics that we intend to continue work on in this best-effort mode and complete by the end of 2018, as promised in our crowdfunding campaign:

- Ensure the base Cedrus Linux kernel driver gets merged;

- Ensure the H264 decoding support in the Cedrus driver gets merged;

- Ensure the H265 decoding support in the Cedrus driver gets merged;

- Ensure the DRM driver improvements get merged;

- Enable VPU support on H5 and A64.

Here are other topics that we do not intend to work on without additional funding. Individuals who want to see some progress on those topics are invited to contribute and join the effort of improving Allwinner VPU support in upstream Linux. Companies interested in those features can also contact us.

- Additional H264 and H265 decoding features: interlaced video support (H264 and H265), quantization matrices (H265), 10-bit (H265), 4K resolution (H265);

- Other codecs beyond MPEG2, H264 and H265, such as VP8;

- Encoding support;

- Additional work on GStreamer integration or X.org integration.

Thanks

Once again, we would like to thank all the individuals and companies who participated to our crowdfunding campaign, and made this project possible. We are very happy to see that despite the uncertainties involved in all software development projects, we have been to deliver the vast majority of the goals, within the expected time frame, while delivering weekly updates of our progress. It was a new experience for Bootlin, and we hope to renew this experience for other Linux kernel upstream developments in the future!